Because Google is the world's most widely used search engine, commanding roughly 92% of the market, ranking well on Google is essential. Google analyses users' search queries to identify the websites that offer the best quality and relevance, and then displays those results.

If your page ranks high enough to appear on the first page of Google, it will attract more traffic and, as a result, more business or visibility.

Positioning on a search engine results page (SERP) is an essential part of search engine optimization (SEO). To be considered an SEO expert, one needs a comprehensive understanding of the Google algorithm and of the Google ranking factors.

Continue reading for some of the new Google search ranking factors that were implemented in 2022 and will carry forward into 2023:

Search engine optimization (SEO) is the practice of optimizing your website to achieve the highest possible ranking in organic search engine results (and yes, you can aim for the first page). In search, "organic" means "natural": it refers to results that are not paid for. This contrasts with paid results, which come from pay-per-click (PPC) advertising.

Your website's organic ranking on Google is determined by an algorithm that weighs various features and SEO metrics.

Let's start by going over the different categories of Google ranking factors before we get into the top factors you can optimize your website pages for:

- On-page ranking criteria refer to the quality of the material currently on the page and the keywords it targets.

- Off-page ranking criteria are dominated by backlinks, which can be thought of as recommendations from other websites or from other pages on your site.

- Technical ranking criteria cover your website's capacity to be crawled and indexed, and to render content quickly and safely for searchers.

- Local ranking considerations include all three aspects, emphasizing listings, reputation, and reviews.

No single ranking factor will make or break your search engine optimization (SEO). Instead, it is the sum of all your technical, on-page, and off-page efforts that will help you rank higher on Google, increase the traffic you receive, and establish credibility.

Google BERT (Bidirectional Encoder Representations from Transformers) Algorithm Update

BERT is an open-source machine learning framework for natural language processing (NLP). It was developed to help computers understand the meaning of ambiguous language in text by using the surrounding text to establish context. The BERT framework was pre-trained on text from Wikipedia, and it can be fine-tuned with question-and-answer datasets.

BERT, which stands for Bidirectional Encoder Representations from Transformers, is based on the Transformer, a deep learning model in which every output element is connected to every input element and the weightings between them are calculated dynamically based on their relationship. (In NLP, this mechanism is referred to as attention.)
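As a rough illustration of how attention computes those weightings, the following minimal sketch applies scaled dot-product attention to a few toy vectors. The vectors and function names are made up for illustration; real models such as BERT learn separate query, key, and value projections and use many attention heads, none of which is shown here.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    dim = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(dim).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in keys]
        weights = softmax(scores)
        # Each output is a weighted mix of all value vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, values))
                 for i in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Toy 2-dimensional embeddings for three tokens (hypothetical numbers).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how strongly one token attends to every token in the sequence, including those to its left and to its right.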

Historically, language models could read text input only sequentially, either left-to-right or right-to-left, but not both at once. BERT differs because it reads in both directions at the same time. This capability, made possible by the invention of Transformers, is referred to as bidirectionality.

Using this bidirectional capability, BERT is pre-trained on two distinct but related NLP tasks: masked language modelling and next-sentence prediction.
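To make masked language modelling more concrete, here is a simplified sketch of how training examples for that task can be prepared: some tokens are hidden, and the model is asked to predict them from the context on both sides. The function name, the whitespace tokenizer, and the example sentence are assumptions for illustration. BERT masks about 15% of tokens, but its actual procedure has extra details (such as sometimes substituting a random token instead of the mask) that are left out here.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, mask_rate=0.15, seed=0):
    """Hide a random subset of tokens and return (masked_tokens, labels).

    labels maps each masked position to the original token the model
    would be trained to predict.
    """
    rng = random.Random(seed)
    # Mask at least one token so short sentences still yield a training target.
    n_mask = max(1, round(len(tokens) * mask_rate))
    positions = rng.sample(range(len(tokens)), n_mask)
    masked = list(tokens)
    labels = {}
    for pos in positions:
        labels[pos] = tokens[pos]
        masked[pos] = MASK
    return masked, labels

sentence = "the bank raised interest rates again this year".split()
masked, labels = mask_tokens(sentence)
print(masked)
print(labels)
```

Because the hidden word can sit anywhere in the sentence, predicting it well requires reading the words before and after it, which is where bidirectionality pays off.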

CIS (Center for Internet Security) Update

The Center for Internet Security (CIS) publishes best-practice security guidelines for many different systems. Following the Container-Optimised OS CIS Benchmark guidelines can support a strong security posture.

Why Duplicate Content is Harmful to your Website

You create duplicate content whenever you purposefully reuse the same text in different locations across your website and social media platforms. When investigating allegations of plagiarism, website proprietors frequently discover that a rival website has published information that has been plagiarized.

This is an example of external duplicate content: content published on one website that is an exact copy of content published on another website.

Internal content duplication refers to creating multiple versions of the same material on your website. This may be done on purpose in some instances; a site owner may recycle a carefully crafted value proposition in several different locations throughout the website.

In some instances, duplicate content is produced internally due to boilerplate text. Take, for instance, shopping at an internet retailer. To populate one hundred product pages with text, you must use either a template or an automated procedure. The template will likely place the boilerplate copy on each page.

The issue arises when that content is not adequately revised on each page, resulting in multiple pages with the same text except for a unique product blurb.

This kind of repetition can extend to a website's metadata tags and URLs, which can confuse search engines and lead to the wrong pages being returned in search results.
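One common way to spot boilerplate-heavy pages on your own site is to compare their text with word shingles and Jaccard similarity. The sketch below is a minimal illustration of that general technique, not a description of how Google detects duplicates. The URLs, the page text, and the 0.6 threshold are all hypothetical.

```python
def shingles(text, size=3):
    """Return the set of overlapping word n-grams (shingles) in text."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)}
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}

def jaccard(a, b):
    """Jaccard similarity of two sets: |intersection| / |union|."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

boilerplate = ("Free shipping on all orders. Returns accepted within 30 days. "
               "Contact our support team for help with sizing.")
pages = {
    "/products/red-shirt": "Soft red cotton shirt. " + boilerplate,
    "/products/blue-shirt": "Soft blue cotton shirt. " + boilerplate,
    "/about": "We are a small family business that has made clothing since 1990.",
}

THRESHOLD = 0.6  # hypothetical cut-off; tune it for your own site
urls = sorted(pages)
for i, first in enumerate(urls):
    for second in urls[i + 1:]:
        score = jaccard(shingles(pages[first]), shingles(pages[second]))
        flag = "near-duplicate" if score >= THRESHOLD else "distinct"
        print(f"{first} vs {second}: {score:.2f} ({flag})")
```

Pages that score above the threshold are candidates for more unique copy on each page.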

According to Google's official policy, the search engine does not penalize websites for duplicate content. However, it does filter duplicate content, which has the same negative effect as a penalty: a drop in rankings for your website's pages.

Duplicate material forces the search engine to decide which of two identical pages to place higher in the search results. It does not matter who created the material; there is a significant chance that the page that was published first will not be the one that appears at the top of the search results.

Why EMD (Exact Match Domain) Is Most Important in SEO

If you want to stay at the top of the rankings on the most prominent search engines, you need to understand the many optimization challenges of today's web. Tackling the many kinds of complicated optimization procedures can be extremely challenging. The exact match domain (EMD) is one example of these difficult optimization approaches.

An exact match domain, also known as an EMD, is a domain name that corresponds directly to the services offered by the company. This type of domain name reflects searched-for terms and commonly assists in driving traffic to the website. Take, for instance, the case in which the name of your website's domain is. This particular EMD technique is used as a time-saving shortcut to reach the top of the search engine results page.

Google Freshness Algorithm: Does Fresh Content Impact SEO?

Google's Freshness Algorithm selects and presents to users, in response to specific search queries, the information that has been most recently updated and is most relevant. Older, less relevant pages that once ranked highly are pushed further down the search engine results page (SERP) to make room for pages with more recent material, even if those pages receive less traffic.

How Does Google's Helpful Content Update Impact SEO?

The helpful content update that Google released is a site-wide signal. It targets websites with a relatively large amount of unsatisfying or unhelpful content, where the content was written first and foremost with search engines in mind. Google wants to reward better and more valuable material that is written for people and helps users.

A topic that has been coming up more and more frequently on social media and elsewhere is material written primarily to rank in search engines. This type of content may be labeled "search engine-first content." In a nutshell, internet users are becoming increasingly annoyed when their searches lead them to web pages that are of no use to them but rank well in search results due to deliberate optimization efforts.

Why Is Link Analysis Important for SEO?

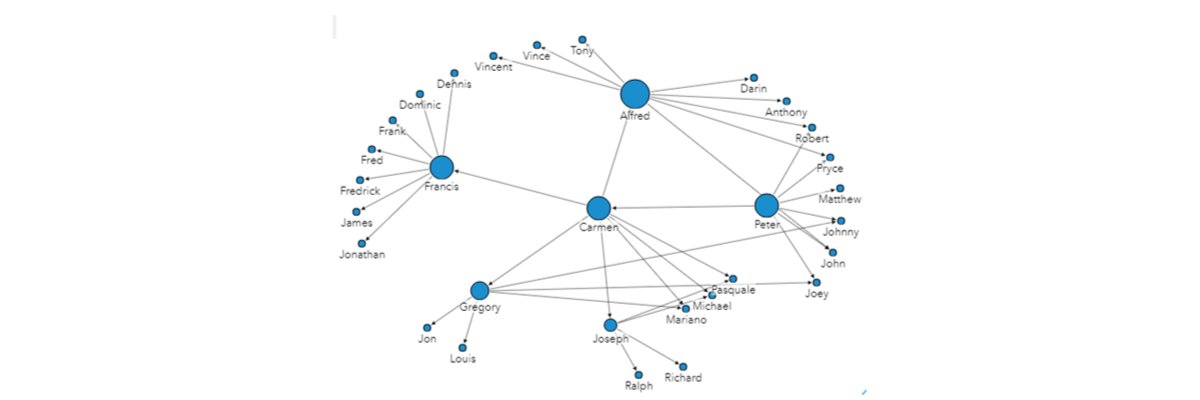

Link analysis is a method of data analysis that focuses on the connections and interactions within a dataset. With link analysis you can calculate centrality metrics such as degree, betweenness, closeness, and eigenvector centrality. Using a link chart or link map, you can also visualize the relationships between nodes.
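As a small illustration, the sketch below computes two of the centrality metrics mentioned above, degree and closeness (the measure based on how near a node is to all the others), for a tiny link graph. The graph and page names are hypothetical, and the links are treated as undirected for simplicity.

```python
from collections import deque

# Hypothetical undirected link graph: an edge means two pages link to each other.
graph = {
    "home": {"blog", "products", "about"},
    "blog": {"home", "products"},
    "products": {"home", "blog", "checkout"},
    "about": {"home"},
    "checkout": {"products"},
}

def degree_centrality(graph):
    """Share of the other nodes each node is directly connected to."""
    n = len(graph)
    return {node: len(neighbors) / (n - 1) for node, neighbors in graph.items()}

def closeness_centrality(graph):
    """Inverse of the average shortest-path distance to the reachable nodes."""
    result = {}
    for start in graph:
        # Breadth-first search gives shortest distances in an unweighted graph.
        dist = {start: 0}
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for nxt in graph[node]:
                if nxt not in dist:
                    dist[nxt] = dist[node] + 1
                    queue.append(nxt)
        total = sum(dist.values())
        result[start] = (len(dist) - 1) / total if total else 0.0
    return result

degree = degree_centrality(graph)
closeness = closeness_centrality(graph)
for node in sorted(graph):
    print(f"{node}: degree={degree[node]:.2f} closeness={closeness[node]:.2f}")
```

Nodes with high scores on both measures, such as the home page here, are the hubs a link chart would place near the centre.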

PageRank Algorithm and Implementation

Google Search employs a ranking system known as PageRank (PR) to order the webpages returned in search results. The algorithm is named after Larry Page, one of Google's founders. PageRank estimates the relative importance of a page by counting the number and quality of the links that point to it. The fundamental assumption is that more important pages are likely to receive more links from other websites.
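The idea can be sketched with the classic power-iteration formulation of PageRank. This is a minimal textbook-style version, not Google's production system. The four-page site is hypothetical, and the damping factor of 0.85 is simply a commonly used value.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Estimate PageRank by power iteration.

    links maps each page to the list of pages it links to.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page gets a baseline share from the random jump.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its rank evenly across its outgoing links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank

# Hypothetical four-page site.
links = {
    "home": ["about", "blog"],
    "about": ["home"],
    "blog": ["home", "about"],
    "contact": ["home"],
}
ranks = pagerank(links)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy graph the home page ends up with the highest score because every other page links to it, while the contact page, which receives no links, ends up with the lowest.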

Is Google's MUM (Multitask Unified Model) A Search Ranking Factor?

In June of 2021, the Multitask Unified Model (MUM) update was made available to users. It is a multimodal algorithm aimed at overcoming language barriers and improving users' search experiences. It can take a complicated query and provide a single, actionable result without requiring numerous searches. To do this, it draws on text written in various languages as well as images, video, and audio. Since its inception, Google has consistently ranked among the most important search engines used by people all over the world.

Google is continuously looking for new ways to enhance its user experience and services to maintain its position as an industry leader. It has made thousands of adjustments to its search algorithm throughout its history, and these updates focus on improving the user's experience. Algorithm changes significantly influence how your company appears in search results.

Removal-based demotion system

Google has policies that allow certain categories of information to be removed. If Google has to process a significant number of such removals involving a particular site, it takes that as a signal to improve its results. This applies in particular to legal removals and personal information removals.

What is Google Page Experience Update?

The page experience update introduced a set of metrics that aim to determine how a user will perceive the experience of a particular web page: whether the page loads quickly, whether it is mobile-friendly, whether it runs on HTTPS, whether it shows intrusive ads, and whether content jumps around as the page loads. Google has a thorough developer document on page experience criteria.

What Does Google Passage Ranking Mean for SEO?

Thanks to passage ranking, Google can identify the subjects of individual passages on the same page and score them independently. For example, imagine you search for "how to set up your AT&T router." Previously, the top-ranking results might have been dominated by articles that gave a general overview of the subject.
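The idea of scoring passages independently can be illustrated with a deliberately simple sketch that ranks each passage of a page by how many query terms it contains. This is a toy stand-in, not Google's method, which is not public at this level of detail. The page text and function names are invented for illustration.

```python
import re

def tokenize(text):
    """Lowercase the text and return its set of words (letters, digits, '&')."""
    return set(re.findall(r"[a-z0-9&]+", text.lower()))

def rank_passages(query, passages):
    """Score each passage by the share of query terms it contains."""
    query_terms = tokenize(query)
    if not query_terms:
        return []
    scored = []
    for index, passage in enumerate(passages):
        overlap = query_terms & tokenize(passage)
        scored.append((len(overlap) / len(query_terms), index))
    # Highest score first; ties keep their original page order.
    return sorted(scored, key=lambda item: (-item[0], item[1]))

page = [
    "Routers connect the devices in your home to the internet.",
    "To set up your AT&T router, plug it in and wait for the light to turn green.",
    "Many providers also rent out modems for a monthly fee.",
]
query = "how to set up your AT&T router"
for score, index in rank_passages(query, page):
    print(f"{score:.2f}  {page[index]}")
```

Even though the page as a whole is a general overview, the second passage stands out as the best match for the query, which is the kind of passage-level signal the feature is meant to capture.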

How Product Reviews Help Boost Ranking

Participating in the Product Ratings program lets you display aggregated ratings of your products to people shopping on Google. Product ratings appear in paid advertisements and in free product listings. They are shown on a scale of one to five stars, together with the total number of reviews for the product.

The recent change to Google's "Product Reviews" algorithm has significant repercussions for a very particular subset of content on the internet, namely product review articles and blogs. With the roll-out of this algorithm comes more detailed guidance for marketers and businesses on what constitutes good content and how to develop it.

Why Does Original Content Matter for SEO?

Content that has never been published before is said to be "original." Unlike content that is reposted, shared, or curated from another source or organisation, it is content you have created yourself. You can publish a mixture of original and curated material, especially on social media.

There is a wide range of originality in published material. Blog posts illustrate this well because they typically provide a new angle on an existing concept or event. Content resulting from original research or distinctive thought leadership carries a higher value. Original content is at its best when it is well-researched and tailored to the target audience's needs. Publishing original, high-quality material, rather than rehashing someone else's ideas, is the hallmark of a quality publication. The more value the content provides, the higher it will rank, the more people will share it, and the more attention and respect your brand will receive.

Understanding Google RankBrain and How It Impacts SEO

RankBrain is the component of Google's core algorithm that determines the most relevant results for search queries by employing machine learning, which is the capacity of machines to teach themselves from the inputs they are given.

Before the introduction of RankBrain, Google relied on its standard algorithm to decide which results to display in response to a certain query.

Reliable information systems

Many individuals turn to Google Search whenever they have a query, whether to learn more about a topic or verify a friend's alleged knowledge of obscure sports statistics. Google receives billions of daily requests because users know they will most likely obtain information that is both useful and trustworthy.

Google's ability to provide a superior search experience is central to the company's usefulness. Google has always been ahead of the competition because of its excellent grasp of the quality of web material, something that has been true ever since the company released the PageRank algorithm.

But many wonder: what exactly does Google mean by "quality," and how does it ensure that the information people see on Google can be trusted?

Google's strategy for ensuring high-quality information can be broken down into three main parts.

First, Google designs its ranking systems to pinpoint the information that users are most likely to find relevant and trustworthy.

Second, Google has implemented various Search features that help you make sense of the material you're viewing online and give you instant access to information from authorities such as health organisations and government agencies.

Third, Google has rules regarding the type of content displayed in Search features. These rules exist to ensure that users see only relevant and valuable results.

Using these three strategies, Google can keep pushing the boundaries of search while ensuring that users everywhere have a positive and reliable experience.

What is Site Diversity Update Analysis?

Link diversity refers to utilizing a variety of specific backlinks to leave a completely random footprint on the internet, one that the Google algorithms are unable to identify and are consequently unable to penalize a website for. Eliminating potential barriers is another way that link diversity helps streamline the ranking process.

What is the Link Spam Update?

Google implements continuous modifications to its spam filter to keep the quality of its search results high. The purpose of Google's spam updates is to enhance the performance of its automated algorithms, which are always active in the background and are designed to identify spam in search results.

Google defines spam very narrowly, so it is difficult to produce spam without being aware of it. Most of what Google classifies as spam consists of low-quality websites that deceive people into installing malware or disclosing their personal information.

Local Search Ranking Update

A local system account is a type of user account that an operating system generates during setup and uses for tasks specific to that OS. The user identifiers used for system accounts are often predetermined (e.g., the root account in Linux).

Some fuzziness exists between system accounts and service accounts. Many system accounts function like service accounts in that they run OS processes. System administrators also use other system accounts, such as the root account, to access the system.

You can restrict access to a single machine (workstation or server) using a local system account. When a user logs into a local account, the machine uses their credentials (username, password, and SID/UID) to verify their identity.

A service is granted full access to the machine's hardware and software when it runs under the local system account, so it is crucial to exercise caution when configuring services to use that account. For instance, a service running as "LocalSystem" on a domain controller (DC) would be granted full permission to access Active Directory Domain Services (AD DS). Any weakness in that service would therefore leave the whole network vulnerable.